Hydra gen molecule#

Mon Jul 30 22:48:15 2018

Two things this week

I got hydra running on my build machine, so I can start doing

regression tests now that things work well enough that I ought to

worry about breaking them. (Note cavalier insertion of "ought to" in

the preceding sentence)

Provided you are running NixOS, this is easier than you'd think from

looking at the Hydra manual, because there is a NixOS module for it.

First, add this or something like it to your configuration.nix and rebuild:

    services.hydra = {
      enable = true;
      hydraURL = "http://${hostname}:3000/";
      notificationSender = "dan@telent.net";
      buildMachinesFiles = [];
    };

Second, reboot, or logout and login, or ensure in some other way that

your shell has sourced the HYDRA_* variables which the previous step

added to /etc/profile. Otherwise step 3 will fail to do what it

should do and the error messages will be entirely unhelpful.

    [dan@loaclhost:~]$ grep HYDRA /etc/profile
    export HYDRA_CONFIG="/var/lib/hydra/hydra.conf"
    export HYDRA_DATA="/var/lib/hydra"
    export HYDRA_DBI="dbi:Pg:dbname=hydra;user=hydra;"

Third, refer to the instructions in the Hydra manual, starting

where it says to run hydra-init then hydra-create-user. The steps

before that point in the manual were already done by the module. Also, there are

systemd services to run the server, the evaluator and the queue

runner, so ignore anything that says you should start them by hand.
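As a rough sketch of steps two and three together, the shell functions below refuse to go any further unless the three HYDRA_* variables are present, and only then run the two commands from the manual. The function names are made up for illustration, and the user name, e-mail address and password are placeholders to be replaced with your own; depending on your setup the commands may also need to run as the hydra user.

```shell
# Succeed only if every variable the NixOS module exports is set and non-empty.
hydra_env_ok() {
    for name in HYDRA_CONFIG HYDRA_DATA HYDRA_DBI; do
        eval "value=\${$name:-}"
        if [ -z "$value" ]; then
            echo "$name is not set: log in again or source /etc/profile" >&2
            return 1
        fi
    done
    return 0
}

# Run the two manual steps, but only once the environment looks sane.
# 'alice', the e-mail address and the password are placeholders.
hydra_bootstrap() {
    hydra_env_ok || return 1
    hydra-init &&
        hydra-create-user alice --full-name 'Alice Q. User' \
            --email-address 'alice@example.org' --password foobar --role admin
}
```

Calling hydra_bootstrap from a fresh login shell should then either do the work or say which variable is missing, which is at least more helpful than the errors you get otherwise.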

Note the empty array for buildMachinesFiles - this was important on

my machine and probably is important on yours too. If you don't have

it, when eventually you get all your projects and jobsets apparently

working properly and evaluating without error, you will find that the

jobs sit in the queue and never get run, because something something

bad defaults no queue runner machines something something.

Things I read wherein I found the solutions to my problems:

- https://gist.github.com/joepie91/c26f01a787af87a96f967219234a8723 how to set it up as a module

- https://github.com/NixOS/hydra/issues/430 why the empty buildMachinesFiles

My Hydra instance is private and destined to remain so, at least for now.

Some refactoring of the kernel derivation into three parts:

unpacking the tree and applying LEDE/OpenWRT patches; building

vmlinux; and applying the DTB and making a uImage. I think this is

an improvement: it will certainly make a few things (like running

qemu, or changing the command line) more convenient, but I'm not sure

I have it exactly right yet.

My current TODO list: as you will see, everything that has happened

recently has been procrastination on the top goal.

* in-place upgrade

** kexec boot

*** boot current kernel with extra memmap reservation

*** when memmap space detected, copy rootfs into it and boot new kernel with phram root

** make nandwrite work

* DONE split kernel derivation into generic kernel / dtb + uimage

* make backuphost actually work on mt300n

* make pppoe work

* DONE CI build (hydra?)

* make resolv.conf from dhcp not copied from build system

Shell out tour#

Wed Aug 8 11:51:52 2018

Nothing to show this week. I have more or less proved to my own

satisfaction that I can reboot into a new image using kexec and a

small C program and some shell scripts. This came at a considerable

personal mental cost, but that's what happens when trying to do text

processing in a Bourne shell (not bash) script without falling back on

awk or sed (not installed). Associative arrays would have been nice.

Actually, just arrays in general would have been a help.

The C program is called writemem and is approximately the moral

equivalent of cat | dd seek=N of=/dev/mem bs=1 except that it writes

in blocks bigger than 1 byte. Just the kind of thing your security

auditor wants to find left lying around on random systems, yeah. I

can see a need for some proper thought on security posture in the near

future: although no-web-interface and

ssh-only-with-a-pubkey-embedded-at-build-time probably makes it less

of a target than any consumer D-Linksysgear box in its default

configuration, there's probably still a lot more to do on that front.
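The writemem source isn't reproduced here, but the "bigger blocks" idea can be sketched in shell, assuming a dd that understands seek= and conv=notrunc. write_at is a hypothetical name, and the restriction that the offset be a multiple of the block size is a simplification of this sketch, not something taken from the C program.

```shell
# write_at TARGET OFFSET [BLOCKSIZE]
# Copy standard input into TARGET starting at byte OFFSET, BLOCKSIZE bytes
# at a time. OFFSET must be a multiple of BLOCKSIZE because dd counts its
# seek in output blocks, not bytes.
write_at() {
    target=$1 offset=$2 bs=${3:-4096}
    if [ $((offset % bs)) -ne 0 ]; then
        echo "offset $offset is not a multiple of $bs" >&2
        return 1
    fi
    # conv=notrunc stops dd truncating a regular file after the last block
    # written, so everything beyond the written range is left untouched.
    dd of="$target" bs="$bs" seek=$((offset / bs)) conv=notrunc 2>/dev/null
}
```

On the router the target would be /dev/mem. Trying it on an ordinary file first is rather less exciting: printf 'BBBB' | write_at scratch.bin 8 4 overwrites bytes 8 to 11 of scratch.bin and leaves the rest alone.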

The attack we want to protect against is (1) being able to write to

random locations in physical memory; (2) being able to reboot into

random kernels using kexec; (3) being able to flash anything we like;

(4) all of the above. Probably (4).

There will be one user-visible change when this stuff lands: whereas

previously we produced separate files for kernel and rootfs when doing

a "development" build, now we make a single agglomerated firmware

image and rely on the kernel mtdsplit code to find the root

filesystem. This is because step 1 of the headless upgrade procedure is

to reboot into the current kernel with an additional memmap

parameter, so in the case that the current kernel is running from RAM

we need the original uImage to still be accessible and not to have

been overwritten since boot. It also makes the build a bit more

consistent between dev and production, which is a nice side effect.
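Composing that command line is exactly the sort of text processing that hurts without awk or sed. Here is a minimal sketch in plain Bourne shell, assuming the reservation uses the memmap=nn$ss form from the kernel's parameter documentation; the function name, and the size and address in the usage note, are invented for illustration.

```shell
# add_memmap CMDLINE SIZE START
# Print CMDLINE with memmap=SIZE$START appended, dropping any memmap=
# parameter that was already there so reservations don't pile up.
add_memmap() {
    out=
    set -f                      # don't glob-expand words from the command line
    for word in $1; do
        case $word in
            memmap=*) ;;
            *) out="${out:+$out }$word" ;;
        esac
    done
    set +f
    # The single-quoted '$' keeps the shell from reading it as a variable.
    printf '%s\n' "${out:+$out }memmap=$2"'$'"$3"
}
```

Something like add_memmap "$(cat /proc/cmdline)" 8M 0x3000000 would print the line to hand to kexec; how it gets handed over depends on the kexec build, so that part is left out.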

First things first, though: need to get it into a state where I can

actually commit something. Last night I dreamt I was in a

bacon-eating competition where the goal was to consume as much as

possible during a MongoDB cluster election before a new primary was

chosen, but I woke up before the contest finished. I mention this

just to give you an idea of where my brain is right now, but it is

probably not a very good idea.

notmuch to say#

Thu Aug 16 10:57:46 2018

Very quick one this week, because it's all a bit mad round here right now:

If you are (as I am) using postfix to send and receive mail, and if

you are (as I am) using rspamd to identify spam emails, and if you are

(as I am) using notmuch to index and search it, you may have noticed

(as I did) that notmuch can't add tags based on the presence of the X-Spam

header that rspamd adds.

This is what I did:

1) I made postfix do local delivery through maildrop

    services.postfix = {
      extraConfig = ''
        [ ... ]
        mailbox_command = ${pkgs.maildrop}/bin/maildrop -d ''${USER}
      '';
    };

This is for NixOS, obviously; if you are not using Nix then make the equivalent change in /etc/postfix/main.cf.

maildrop will default to delivering the mail as normal if it can't

find a $HOME/.mailfilter file, so once you have established that its

idea of "normal" matches yours then this ought to be a safe change to

make for all your users.

2) I added a .mailfilter file to run notmuch insert, which accepts a

message on standard input, adds it to the notmuch maildir and indexes it with the specified tags:

    [dan@vritual:~]$ cat ~/.mailfilter
    if(/X-Spam.+yes/:H)
      to "|/home/dan/.nix-profile/bin/notmuch insert +spam -inbox"
    to "|/home/dan/.nix-profile/bin/notmuch insert -inbox"

I don't manage my home directory declaratively - if you are running

NixOS and have home-manager or something like that, then you can make

this change in a more Nixy way.

3) Profit.

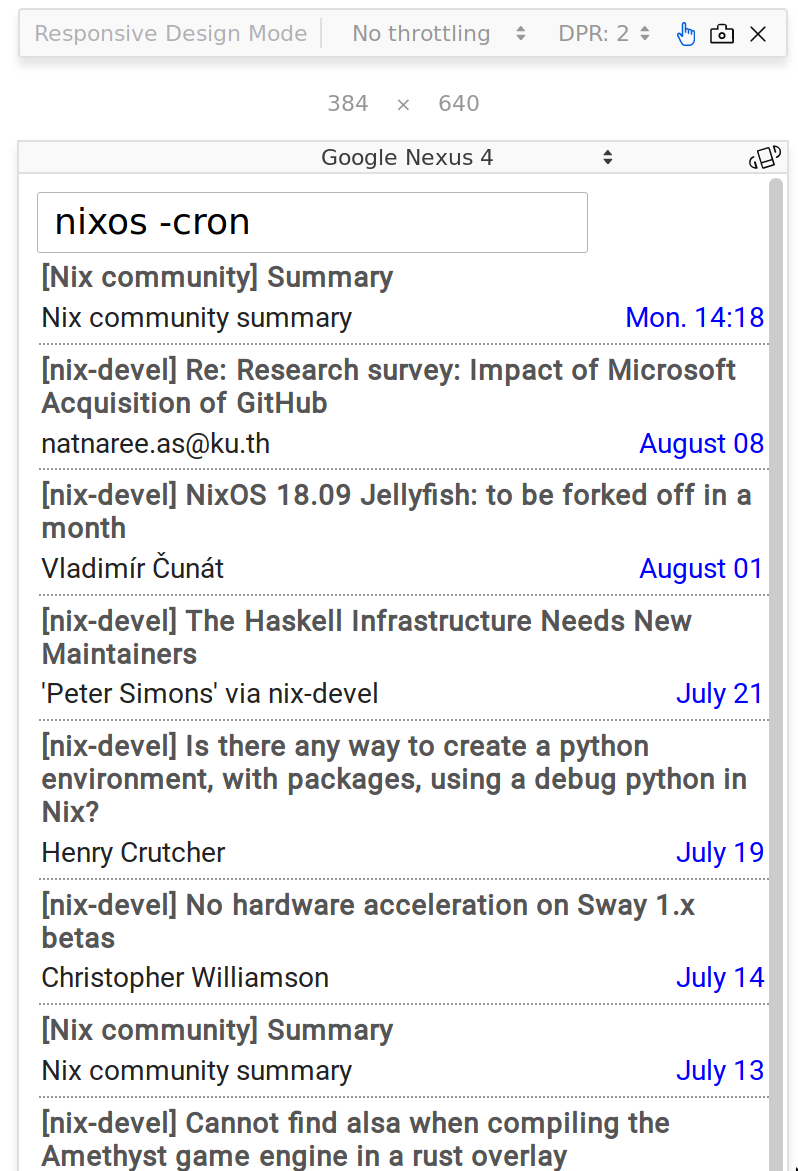

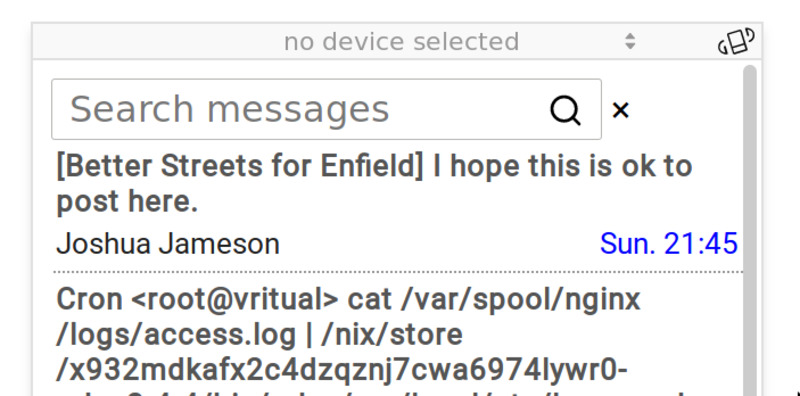

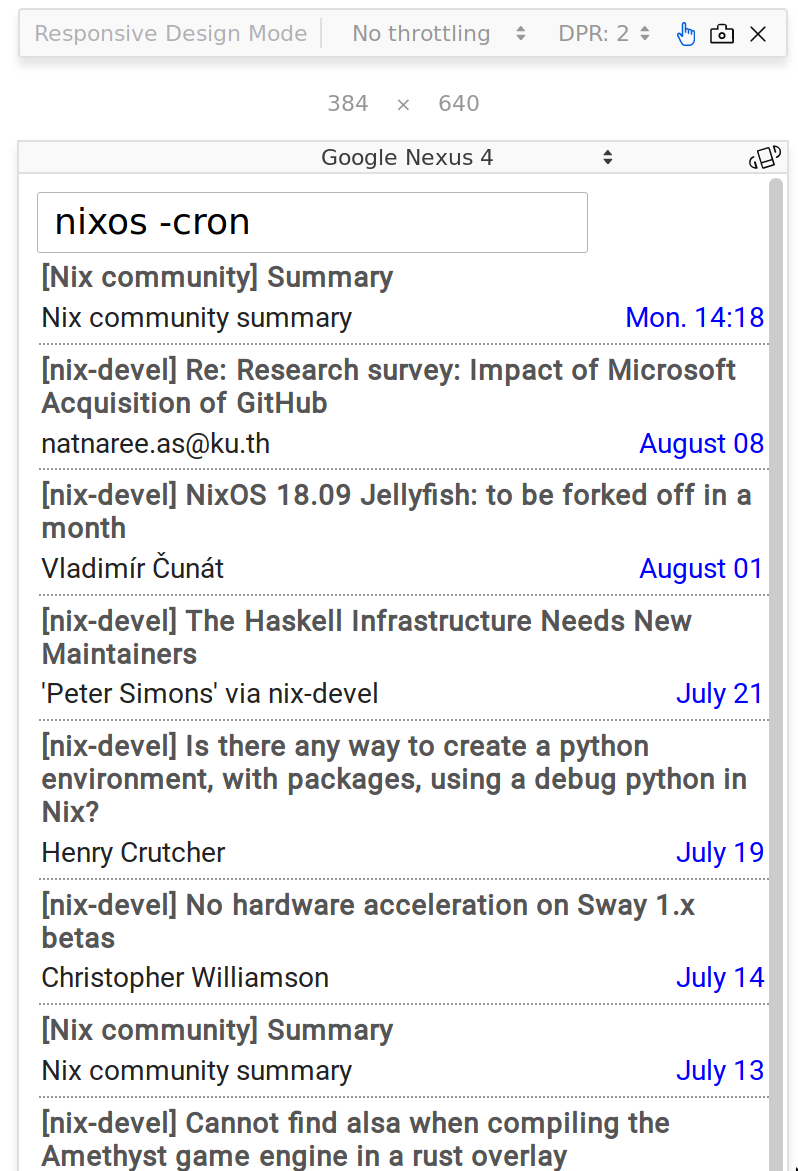

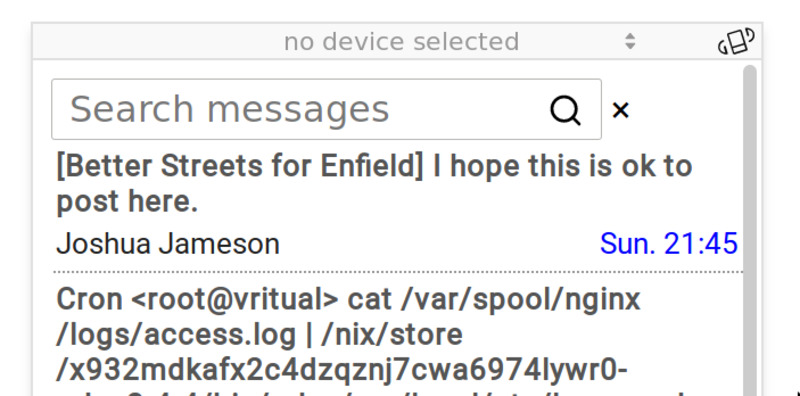
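Before trusting the rule in step 2 with real mail, it can be dry-run by hand. The sketch below is not maildrop, just an approximation of the /X-Spam.+yes/:H test using sed and grep on a machine that has them: it looks only at the header block (everything before the first blank line) and ignores case, which as far as I understand is what maildrop patterns do by default. The function name is hypothetical.

```shell
# would_tag_spam FILE
# Succeed if any header line of the message in FILE matches X-Spam.+yes.
would_tag_spam() {
    # sed prints up to and including the first empty line and then quits,
    # so text in the message body can never trigger a match.
    sed '/^$/q' "$1" | grep -Eiq 'X-Spam.+yes'
}
```

Running it over a few messages from an existing maildir (would_tag_spam path/to/message && echo spam) gives an idea of what the filter is likely to do, without delivering anything.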

In not-really-other news, I embarked on a quick let's-learn re-frame

hack to build a mobile-friendly notmuch web interface. It's not quite

at "works for me" level yet, and is certainly not at "you can run this

on a network" level (it's running the notmuch command with

user-supplied arguments ..) but there has been progress.

Gardening at night#

Wed Aug 22 22:32:53 2018

No, honestly. My excuse this week for no NixWRT progress is that I

have been removing all the turf from the dead lawn in preparation for

putting down something greener.

But I will have to get back to it soon. I've just learnt that my

NixCon talk proposal

has been accepted, so I had better get it into a usable state soon if

only so I have something to talk about.

Epsilon has progressed marginally: the search box now has a magnifying glass icon in it.

Revisiting Clojure Nix packaging#

Fri Aug 31 11:43:27 2018

Slightly over a year ago I wrote about how to build a Clojure project with Nix, and this week I had occasion to refer back to it because I want to have a production-style dogfood build of Epsilon.

It's a bit simpler now than it was then, because in the intervening

time Clojure has added Deps and CLI tools, which give us a simple

readable EDN file in which we can list our project's immediate

dependencies (see deps.edn for Epsilon) and

a function resolve-deps that we can call to get the transitive

dependencies.

We embed this function call into a script which dumps a JSON file with

the same information, and then whenever we add to or update deps.edn we run:

    $ CLJ_CONFIG=. CLJ_CACHE=. nix-shell -p clojure jre --run \
        "clojure -R:build -C:build -Srepro -Sforce -m nixtract generated/nix-deps.json"

(Note that the clojure command will fail silently if it cannot find

a java runtime on the path, or if the current directory contains a

malformed deps.edn file. Each of these problems took me a while to

find - I tell you this so that you may learn from my mistakes.)
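Since the failure is silent, one defence is to delete the old output first and insist that a fresh, non-empty file appears. This is a minimal sketch wrapping the command above; regen_deps and output_ok are names invented here, and a non-empty file is of course only weak evidence that its contents are right.

```shell
# Succeed only if FILE exists and is not empty.
output_ok() {
    if [ ! -s "$1" ]; then
        echo "$1 is missing or empty: check deps.edn and the Java runtime" >&2
        return 1
    fi
}

# Regenerate the dependency list and complain loudly if nothing came out.
regen_deps() {
    out=generated/nix-deps.json
    rm -f "$out"        # a stale file must not pass for success
    CLJ_CONFIG=. CLJ_CACHE=. nix-shell -p clojure jre --run \
        "clojure -R:build -C:build -Srepro -Sforce -m nixtract $out"
    output_ok "$out"
}
```

Run regen_deps from the project root after editing deps.edn; if it prints the complaint, the old JSON is already gone, so there is no stale dependency list left to build from by accident.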

The second half of the puzzle - build time - is not much changed from

how we did it last time around, though I did take the opportunity to

make it use the generic builder instead of making its own

builder.sh.

Probably I should point out that this downloads all the dependencies

into /nix/store as JARs and builds a classpath so the JRE can find

them - it doesn't make an uberjar. This fits my current use case,

because I want to run it on a NixOS box and separate jars provide at

least the possibility that some of them might be shared by more than

one app. I will obviously have to come back to this if/when I need to

build an uberjar for distribution.

Also, if you are doing advanced things with CLJS external libraries

(like, Node dependencies and stuff) then this will not help and you

are on your own. There is a name for the (CL)JS dependency ecosystem,

it's a compound word in which the first part is the collective noun

for a collection of stars, and the second part rhymes with "duck".